Notebook developed by SzuLun Huang szuh@berkeley.edu

Under the guidance of Eric Van Dusen ericvd@berkeley.edu

UC Berkeley, Data Science

📌 JupyterHub Version¶

This notebook runs on JupyterHub — no local installation required.

Before You Begin:

Make sure you are logged into JupyterHub

Run cells in order from top to bottom

🚀 How to Start¶

Click Kernel in the top menu

Select Restart Kernel and Run All Cells

Wait about 1-2 minutes for the model to load ⏳

Then use the interactive buttons below! ✅

⚠️ You only need to do this once each time you open the notebook!

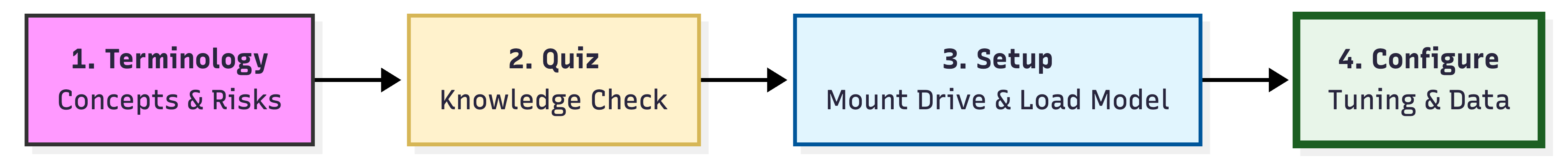

🗺️ Course Roadmap: From Theory to Practice¶

📘 Part 1: Key Terminology¶

Before we touch the code, we must master the language of AI.

📚 Key Terminology¶

| Category | Term | Definition |
|---|---|---|
| Foundations | LLM | Large Language Models (e.g., GPT-4). The “giant brains” trained on massive datasets. |
| Foundations | AI / SLM | Artificial Intelligence & Small Language Models (portable, faster versions of LLMs). |
| Controls | Prompt Engineering | The art of crafting precise instructions for AI. |
| Controls | Temperature | 🌡️ Creativity slider: High = Creative; Low = Focused/Stable. |
| Controls | Few-shot Learning | 💡 Providing examples to guide the AI’s style and format. |
| Technical | Tokens | 🧱 The units AI reads. 1 token ≈ 0.75 words. They define memory and output length. |
| Technical | Quantization | A technique to compress models to run on small devices (like Colab). |
| Risks | Hallucination | When AI generates “fake” information with high confidence. |
| Risks | Sycophancy | The tendency of AI to agree with your biases or wrong inputs. |
| Core Value | Human-in-the-loop | The essential step where YOU review and refine the AI’s work. |
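To make the Quantization row concrete, here is a rough back-of-the-envelope sketch of why fewer bits per weight means a smaller model. The function name `model_size_gb` and the round figure of 1 billion parameters are illustrative assumptions, not values measured from this notebook's model. Real quantized files can be somewhat larger than this estimate because they also store metadata and may keep some weights at higher precision.

```python
def model_size_gb(n_params, bits_per_weight):
    """Approximate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

# Hypothetical model with 1 billion parameters
n_params = 1_000_000_000

for label, bits in [("16-bit (unquantized)", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label:>22}: about {model_size_gb(n_params, bits):.2f} GB")
```

Going from 16 bits to 4 bits per weight cuts the estimate to a quarter, which is the basic reason a quantized model fits in modest RAM.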

🔍 Deep Dive: The Science of AI Control¶

We’ve seen the definitions, but how do these “knobs” actually change your writing? Let’s look under the hood.

🌡️ Temperature: The Creativity Scale¶

Temperature is the most critical setting. It determines how much “risk” the AI takes when choosing the next word.

Temperature Scale:

0.0 ├────────────┼────────────┼────────────┼────────────┤ 2.0

Conservative (Precise) | Balanced (Natural) | Creative (Unexpected) | Chaotic (Random)

0.2 (The Accountant) 🧮: Precise and safe. Best for facts, summaries, and logic.

0.7 (The Journalist) ⭐: Balanced and natural. Best for emails and essays.

1.0 (The Poet) 🎨: Creative and unexpected. Best for brainstorming and stories.

1.5+ (The Chaos) ⚠️: Too random! Avoid unless experimenting.
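The effect of the temperature settings above can be sketched with a few lines of plain Python. This is a simplified illustration of temperature-scaled sampling, not the exact code that runs inside the model: the three candidate words and their scores are made up, and the helper names `softmax_with_temperature` and `sample_word` are hypothetical. The sketch also requires a temperature above zero; at exactly 0, inference libraries commonly just pick the top-scoring word.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Made-up scores for three candidate next words
words = ["the", "a", "banana"]
logits = [2.0, 1.0, 0.1]

def sample_word(temperature, seed=0):
    """Draw one word according to the temperature-adjusted probabilities."""
    rng = random.Random(seed)
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(words, weights=probs, k=1)[0]

for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

At a low temperature almost all of the probability lands on the top-scoring word, while a high temperature flattens the distribution so unlikely words get picked more often.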

🧱 Tokens: Your AI’s Fuel & Memory¶

Instead of characters or words, AI processes Tokens — chunks of text that the model understands.

1 token ≈ 0.75 words in English. AI measures everything in tokens!

1. Max Tokens (The Gas Tank) 🚗 — Your output length limit.

50 = 1-2 sentences | 200 = 1 paragraph | 500 = 1 page

2. Context Window (The Short-term Memory) 🧠 — How much the AI can “remember” at once.

2,048 tokens ≈ 3-4 pages | 4,096 ≈ 6-8 pages | 8,192+ ≈ 12+ pages

💡 Too much text? The AI will “forget” the beginning!
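The token arithmetic above can be turned into a quick planning check. This is only a sketch built on the 0.75 words-per-token rule of thumb; a real tokenizer will give different counts, especially for code or non-English text. The helper names `estimate_tokens` and `fits_in_context` are hypothetical, and the default window of 4,096 tokens is an assumption you should replace with your model's actual setting.

```python
import math

WORDS_PER_TOKEN = 0.75  # rule of thumb for English text

def estimate_tokens(text):
    """Rough token estimate from a whitespace word count."""
    n_words = len(text.split())
    return math.ceil(n_words / WORDS_PER_TOKEN)

def fits_in_context(prompt, max_tokens, n_ctx=4096):
    """True if the prompt plus the requested output length fits in the window."""
    return estimate_tokens(prompt) + max_tokens <= n_ctx

short_prompt = "Summarize this paragraph in two sentences."
long_prompt = "word " * 3000  # stands in for a long document

print(estimate_tokens(short_prompt), fits_in_context(short_prompt, max_tokens=200))
print(estimate_tokens(long_prompt), fits_in_context(long_prompt, max_tokens=200))
```

The check counts input and output together because both share the same context window.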

💡 Few-shot Learning: The Power of Examples¶

Think of this as “Monkey see, monkey do.” The fastest way to get personalized results without retraining the model.

Zero-shot (No Examples)¶

Prompt: "Write a professional email."

Output: Generic, formal email with standard structure.

Few-shot (With Examples)¶

Prompt:

---

Example 1: "Hey team! 👋 Quick update: we shipped the new feature today.

Let me know if you spot any bugs!"

Example 2: "Morning everyone! ☀️ Just a heads up – I'll be OOO next Friday.

Hit me up if you need anything before then!"

Your task: Write an email about the upcoming deadline.

---

Output: Matches your casual tone, emoji usage, and friendly style!

Why it Matters:¶

Adapts to YOUR voice: Writing style, formality, humor, structure

No training needed: Works instantly with just 2-3 examples

Highly flexible: Works for emails, code, creative writing, and more
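The few-shot prompt layout shown above is easy to assemble in code. The helper below is a minimal sketch: the name `build_few_shot_prompt` is hypothetical, and the "Example N:" / "Your task:" labels simply mirror the sample prompt in this section rather than any required format.

```python
def build_few_shot_prompt(examples, task):
    """Combine writing samples and a task into a single few-shot prompt string."""
    lines = []
    for i, example in enumerate(examples, start=1):
        lines.append(f'Example {i}: "{example}"')
    lines.append("")  # blank line between the examples and the task
    lines.append(f"Your task: {task}")
    return "\n".join(lines)

examples = [
    "Hey team! Quick update: we shipped the new feature today.",
    "Morning everyone! Just a heads up, I'll be OOO next Friday.",
]
prompt = build_few_shot_prompt(examples, "Write an email about the upcoming deadline.")
print(prompt)
```

Passing an empty list of examples produces a zero-shot prompt with the same task line, which makes the two approaches easy to compare side by side.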

🎭 Risks: The “Liar” and the “Yes-Man”¶

❌ Hallucination (The Confident Liar)¶

Since AI only predicts the next word based on patterns, it can confidently invent false information.

Fake dates: “Shakespeare wrote Romeo and Juliet in 1612” (Actually, it was written in the mid-1590s and first printed in 1597)

Fake citations: “According to Smith et al. (2023)...” (No such paper exists)

🛡️ Protection: Always verify facts, dates, and citations from reliable sources!

❌ Sycophancy (The Yes-Man)¶

AI tends to agree with you to be “helpful,” even when you’re wrong.

You: “Einstein discovered gravity, right?”

AI: “Yes, Einstein made significant contributions...” (Wrong! That was Newton)

🛡️ Protection: Ask the AI to challenge your assumptions or fact-check your statements.

⚖️ Core Principle: Human-in-the-Loop (HITL)¶

AI is your co-pilot, not the captain.

| 🤖 AI provides | 👤 YOU provide |

|---|---|

| Speed & first drafts | Critical thinking |

| Pattern recognition | Fact verification |

| Tireless iteration | Final decisions |

Never blindly trust AI output — always review, verify, and refine!

# Interactive 5-question random quiz

# ──────────────────────────────────────────────────────

# 🎓 Part 2: AI Control Quiz - Random 5 Questions

# ──────────────────────────────────────────────────────

import ipywidgets as widgets

from IPython.display import display, HTML, clear_output

import random

question_bank = [

{"q": "Which temperature setting is best for writing a creative poem?",

"options": ["0.2 (The Accountant)", "0.7 (The Journalist)", "1.0 (The Poet)", "2.0 (The Chaos)"],

"answer": "1.0 (The Poet)", "hint": "You want creativity, but it should still make sense!"},

{"q": "You need to extract exact dates from a legal document. What temperature?",

"options": ["0.2 (The Accountant)", "0.7 (The Journalist)", "1.0 (The Poet)", "1.5+ (The Chaos)"],

"answer": "0.2 (The Accountant)", "hint": "You want precision and safety, not creativity!"},

{"q": "What happens when temperature is set to 2.0?",

"options": ["More precise output", "Faster generation", "Chaotic and nonsensical text", "Better grammar"],

"answer": "Chaotic and nonsensical text", "hint": "Very high temperature = too much randomness!"},

{"q": "You're writing a professional business email. What's the ideal temperature?",

"options": ["0.2", "0.7-0.9", "1.2", "1.8"],

"answer": "0.7-0.9", "hint": "The 'Journalist' zone - professional and natural!"},

{"q": "What happens if max_tokens is set too low?",

"options": ["AI will write faster", "AI will cut off mid-sentence", "AI will be more creative", "Nothing changes"],

"answer": "AI will cut off mid-sentence", "hint": "It's like running out of gas!"},

{"q": "Approximately how many English words is 100 tokens?",

"options": ["25 words", "75 words", "100 words", "200 words"],

"answer": "75 words", "hint": "1 token ≈ 0.75 words in English"},

{"q": "You're summarizing a 20-page document, but the AI keeps 'forgetting' the beginning. What's the problem?",

"options": ["Temperature too high", "Context Window too small", "Max Tokens too low", "Hallucination"],

"answer": "Context Window too small", "hint": "Think about the AI's 'Short-term Memory' limit!"},

{"q": "What is the Context Window?",

"options": ["The output length limit", "How much the AI can remember (input + output)", "The AI's training data", "The response speed"],

"answer": "How much the AI can remember (input + output)", "hint": "It's the AI's short-term memory capacity!"},

{"q": "In Few-shot learning, how many examples do you typically need?",

"options": ["0 examples", "2-3 examples", "50+ examples", "Thousands of examples"],

"answer": "2-3 examples", "hint": "Remember: 'Monkey see, monkey do' - you don't need many!"},

{"q": "What's the difference between zero-shot and few-shot prompting?",

"options": ["Zero-shot is faster", "Few-shot provides examples, zero-shot doesn't", "Zero-shot is more accurate", "There's no difference"],

"answer": "Few-shot provides examples, zero-shot doesn't", "hint": "Few-shot = showing examples; Zero-shot = just asking"},

{"q": "You want the AI to match YOUR specific writing style. What's the best approach?",

"options": ["Use high temperature", "Provide 2-3 examples of your writing (few-shot)", "Use more max_tokens", "Ask it to 'write like me'"],

"answer": "Provide 2-3 examples of your writing (few-shot)", "hint": "Show, don't tell!"},

{"q": "The AI tells you 'Shakespeare wrote Romeo and Juliet in 1612.' What is this?",

"options": ["Quantization", "Hallucination", "Sycophancy", "Few-shot learning"],

"answer": "Hallucination", "hint": "The AI is being a 'Confident Liar'! (The play was first printed in 1597)"},

{"q": "What is AI hallucination?",

"options": ["When AI generates images", "When AI invents false information confidently", "When AI is too creative", "When AI refuses to answer"],

"answer": "When AI invents false information confidently", "hint": "It's when the AI makes up 'facts'!"},

{"q": "How can you protect against hallucinations?",

"options": ["Use higher temperature", "Always verify facts from reliable sources", "Use more tokens", "Disable few-shot learning"],

"answer": "Always verify facts from reliable sources", "hint": "Never blindly trust AI output!"},

{"q": "You say 'Einstein discovered gravity, right?' and the AI agrees. What is this?",

"options": ["Hallucination", "Sycophancy", "Zero-shot learning", "High temperature effect"],

"answer": "Sycophancy", "hint": "The AI is being a 'Yes-Man' - Newton discovered gravity!"},

{"q": "What is sycophancy in AI?",

"options": ["AI being too creative", "AI agreeing with you even when you're wrong", "AI refusing to help", "AI being too slow"],

"answer": "AI agreeing with you even when you're wrong", "hint": "It's the 'Yes-Man' behavior!"},

{"q": "What does HITL (Human-in-the-Loop) mean?",

"options": ["AI makes all final decisions", "Humans verify and guide AI output", "AI works without human input", "Humans only provide initial prompts"],

"answer": "Humans verify and guide AI output", "hint": "You're the Captain, AI is the Co-pilot!"},

{"q": "In the HITL principle, who is responsible for final decisions?",

"options": ["The AI", "The human", "Both equally", "Neither"],

"answer": "The human", "hint": "AI is the co-pilot, YOU are the captain!"},

{"q": "Which model are we using in this notebook?",

"options": ["ChatGPT", "Claude", "Llama (via Llama.cpp)", "GPT-4"],

"answer": "Llama (via Llama.cpp)", "hint": "Check the top of the notebook!"},

{"q": "What is Llama.cpp?",

"options": ["An AI model", "A tool to run LLMs locally", "A cloud service", "A programming language"],

"answer": "A tool to run LLMs locally", "hint": "It's a lightweight inference engine, not a model!"},

]

# The container is created and displayed once. run_quiz() only swaps its children,
# so "Try Again" also works where display() inside a button callback is not rendered.
quiz_container = widgets.VBox()

def run_quiz():
    selected_questions = random.sample(question_bank, 5)
    output = widgets.Output()
    widget_list = []

    for item in selected_questions:
        w = widgets.RadioButtons(options=item["options"], description='',
                                 disabled=False, layout={'width': 'max-content'})
        widget_list.append(w)

    def on_submit(b):
        with output:
            clear_output()
            print("=" * 70)
            score = 0
            for i, w in enumerate(widget_list):
                if w.value == selected_questions[i]["answer"]:
                    score += 1
                    print(f"✅ Question {i+1}: Correct!")
                else:
                    print(f"❌ Question {i+1}: Wrong.")
                    print(f" 💡 Hint: {selected_questions[i]['hint']}")
                    print(f" ✓ Correct answer: {selected_questions[i]['answer']}")
                print()
            print("=" * 70)
            percentage = (score / len(selected_questions)) * 100
            print(f"\n📊 Final Score: {score}/{len(selected_questions)} ({percentage:.0f}%)\n")
            if score == 5:
                display(HTML("<h2 style='color:#2e7d32;'>🏆 Perfect Score! You're an AI Control Expert!</h2>"))
            elif score >= 4:
                display(HTML("<h2 style='color:#1976d2;'>👍 Great Job! You understand the fundamentals well!</h2>"))
            elif score >= 3:
                display(HTML("<h2 style='color:#f57c00;'>📚 Good Start! Review the material and try again!</h2>"))
            else:
                display(HTML("<h2 style='color:#c62828;'>🔄 Keep Learning! Go back to the Deep Dive section!</h2>"))

    def on_retry(b):
        # Draw 5 new questions and rebuild the children of the same container
        run_quiz()

    header = widgets.HTML("""
    <h2>🎓 Part 2: AI Control Quiz - Random 5 Questions</h2>
    <p style='font-size:14px;color:#666;'>📝 5 random questions from a bank of 20 | Click 'Try Again' for new questions!</p>
    <hr>
    """)

    question_widgets = []
    for i, item in enumerate(selected_questions):
        question_widgets.append(widgets.HTML(f"<p style='font-weight:bold;margin-top:15px;'>{i+1}. {item['q']}</p>"))
        question_widgets.append(widget_list[i])

    submit_btn = widgets.Button(description="Submit Answers", button_style='primary', icon='check')
    retry_btn = widgets.Button(description="Try Again", button_style='info', icon='refresh')
    submit_btn.on_click(on_submit)
    retry_btn.on_click(on_retry)

    quiz_container.children = [header] + question_widgets + [widgets.HBox([submit_btn, retry_btn]), output]

run_quiz()
display(quiz_container)

✍️ Part 3: Build Your Personal Writing Assistant¶

Now that you understand the theory, it’s time to build something real!

In this section, you will set up your AI step by step.

🗺️ Steps Overview¶

| Step | What You’ll Do |

|---|---|

| 📥 Step 1 | Set model path from shared folder |

| 🔧 Step 2 | Load the model into memory |

| 💬 Step 3a | Say hello (no system prompt) |

| ✅ Step 3b | Test with your custom system prompt |

| ✍️ Step 4 | Use the full Writing Assistant |

| 🎯 Step 5 | Teach the AI your writing style |

| 🎛️ Step 5A | Experiment with AI parameters |

| 📊 Step 5B | Compare your experiment results |

⚠️ Important: Run cells in order from top to bottom!

⚡ Step 0: Import All Libraries¶

# ── Step 0, All imports ───────────────────────────────────────

# Run this cell FIRST before anything else!

import os

import threading

import time

import warnings

import pandas as pd

import ipywidgets as widgets

import psutil

from IPython.display import display, HTML, clear_output

from llama_cpp import Llama

import json

warnings.filterwarnings("ignore")

Step 1: RAM Check¶

# ── RAM Check ─────────────────────────────────────────────────────────

ram = psutil.virtual_memory()

print(f"💾 System RAM: {ram.total / 1e9:.1f} GB total")

print(f" Available: {ram.available / 1e9:.1f} GB free")

print(f" Used: {ram.used / 1e9:.1f} GB ({ram.percent}%)")

if ram.available / 1e9 < 2:
    print("\n⚠️ Warning: Less than 2GB available; model may run slowly.")
else:
    print("\n✅ RAM looks good!")

print("\n" + "=" * 60)

print("✅ All libraries imported successfully!")

print("=" * 60)

💾 System RAM: 27.3 GB total

Available: 23.1 GB free

Used: 4.3 GB (15.6%)

✅ RAM looks good!

============================================================

✅ All libraries imported successfully!

============================================================

# ── Model Configuration ───────────────────────────────

model_filename = 'Llama-3.2-1B-Instruct-Q4_K_M.gguf'

model_path = f'/home/jovyan/shared/{model_filename}'

# n_ctx: tokens the model can "see" at once (~3,000 words)

n_ctx = 4096

# n_threads: CPU cores used for inference

n_threads = 4

print(f'Model : {model_filename}')

print(f'Path : {model_path}')

print(f'Context : {n_ctx} tokens')

print(f'Threads : {n_threads}')

Model : Llama-3.2-1B-Instruct-Q4_K_M.gguf

Path : /home/jovyan/shared/Llama-3.2-1B-Instruct-Q4_K_M.gguf

Context : 4096 tokens

Threads : 4

# ── Verify and Load Model ─────────────────────────────

# Verify file exists before loading

if os.path.exists(model_path):
    size_gb = os.path.getsize(model_path) / (1024**3)
    print(f'✅ Found {model_filename} ({size_gb:.2f} GB)')
else:
    print('❌ Model not found. Ask your teacher to check the shared folder.')
    raise FileNotFoundError(f'Model not found at {model_path}')

# Load model into memory (takes 1-2 minutes)
print('⏳ Loading model… (this may take 1-2 minutes)\n')

model = Llama(
    model_path=model_path,
    n_ctx=n_ctx,
    n_threads=n_threads,
    verbose=False,
)

clear_output(wait=True)
print('=' * 50)
print('✅ Model loaded and ready!')
print('=' * 50)

==================================================

✅ Model loaded and ready!

==================================================

💬 Part 3a: Say Hello to Your AI¶

⚠️ Make sure you ran Step 1 and Step 2 first!¶

This cell sends your first message to the AI — but with a deliberate flaw.

🧪 Spot the Problem¶

⚠️ Notice something strange?

Without a system prompt, the model has no fixed identity.

Sometimes it says it’s an AI — but sometimes it invents a human persona like “Emily” or “Sarah”.

The output is unpredictable and inconsistent.

Your job: Run the cell 2-3 times and observe:

Does the answer change each time?

Does it ever claim to be a human with a name?

We will fix this in Step 3b by adding a system prompt.

# ── Step 3a: Say Hello to Your AI (No System Prompt) ──────────────────

display(HTML("""

<style>

/* ── Catppuccin Mocha ── */

.widget-dropdown select {

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 4px 8px !important;

}

.widget-dropdown select:focus {

border-color: #89b4fa !important;

outline: none !important;

}

.widget-textarea textarea {

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 8px 10px !important;

resize: vertical !important;

}

.widget-textarea textarea:focus {

border-color: #89b4fa !important;

outline: none !important;

}

.widget-textarea textarea::placeholder {

color: #6c7086 !important;

}

.widget-text input[type="text"] {

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 4px 8px !important;

}

.widget-text input[type="text"]:focus {

border-color: #89b4fa !important;

outline: none !important;

}

.widget-label, .widget-label-basic {

color: #a6adc8 !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

}

.widget-button.mod-warning {

background: #f9e2af !important;

color: #1e1e2e !important;

border: none !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-weight: bold !important;

}

.widget-button.mod-primary {

background: #89b4fa !important;

color: #1e1e2e !important;

border: none !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-weight: bold !important;

}

.widget-button.mod-primary:hover,

.widget-button.mod-warning:hover {

filter: brightness(1.1) !important;

cursor: pointer !important;

}

.widget-button.mod-primary:disabled {

background: #313244 !important;

color: #6c7086 !important;

}

</style>

"""))

progress_out = widgets.Output()

result_out = widgets.Output()

run_btn = widgets.Button(

description = "▶ Test Model",

button_style = "warning",

layout = widgets.Layout(width="160px", margin="10px 0")

)

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#1e1e2e;border:1px solid #45475a;

border-radius:12px;padding:18px 20px;margin:10px 0;color:#cdd6f4">

<div style="font-size:0.63em;color:#f38ba8;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Step 3a — Intentional Flaw</div>

<h3 style="color:#cdd6f4;margin:0 0 14px;font-size:1.0em">💬 Say Hello to Your AI</h3>

<div style="background:#181825;border-left:3px solid #f38ba8;border-radius:0 6px 6px 0;

padding:10px 14px;font-size:0.78em;color:#fab387;

display:flex;align-items:flex-start;gap:8px">

<span>⚠️</span>

<span>No system prompt is used here — observe what happens to the model's identity.</span>

</div>

</div>

"""))

display(run_btn, progress_out, result_out)

def on_run(_):
    run_btn.disabled = True
    run_btn.description = "⏳ Running..."
    with progress_out: clear_output()
    with result_out: clear_output()

    timer_output = widgets.Output()
    with progress_out:
        display(HTML("""
        <div style="font-family:'IBM Plex Mono','Fira Code',monospace;
        background:#1e1e2e;border:1px solid #45475a;
        border-radius:12px;padding:18px 20px;margin-top:10px;color:#cdd6f4">
        <div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;
        letter-spacing:0.15em;margin-bottom:8px">Generating...</div>
        <div style="font-size:0.82em;color:#a6adc8;">
        🤖 Model is thinking... please wait...
        </div>
        </div>
        """))
        display(timer_output)

    start_time = time.time()
    result_container = {}

    # Run the model in a background thread so the countdown can update meanwhile
    def run_model():
        try:
            response = model(
                "Please introduce yourself in 2-3 sentences. Who are you and what can you help with?",
                max_tokens=100,
                temperature=0.7,
                echo=False
            )
            result_container["result"] = response["choices"][0]["text"].strip()
            result_container["tokens"] = response["usage"]["completion_tokens"]
        except NameError:
            result_container["error"] = "name"
        except Exception as e:
            result_container["error"] = str(e)

    thread = threading.Thread(target=run_model)
    thread.start()

    # ── Hatching chick countdown ──────────────────────────────
    total = 10
    while thread.is_alive():
        elapsed = int(time.time() - start_time)
        remaining = max(0, total - elapsed)
        progress = min(elapsed / total, 1.0)
        if progress < 0.25:
            emoji = "🥚"
            msg = "Warming up the egg..."
            color = "#f9e2af"
        elif progress < 0.5:
            emoji = "🥚💥"
            msg = "Something is moving inside..."
            color = "#fab387"
        elif progress < 0.75:
            emoji = "🐣"
            msg = "Almost there..."
            color = "#a6e3a1"
        else:
            emoji = "🐥"
            msg = "Coming out!"
            color = "#89dceb"
        bar_filled = int(progress * 20)
        bar = "🟡" * bar_filled + "⬜" * (20 - bar_filled)
        with timer_output:
            clear_output(wait=True)
            display(HTML(
                f'<div style="font-family:\'IBM Plex Mono\',monospace;'
                f'padding:12px 0;font-size:1.1em;text-align:left;">'
                f'<span style="font-size:2em">{emoji}</span><br>'
                f'<span style="color:{color};font-weight:bold;">{msg}</span><br>'
                f'<span style="font-size:0.8em;color:#a6adc8;">⏳ {remaining} sec remaining</span><br>'
                f'<span style="letter-spacing:1px;font-size:0.85em">{bar}</span>'
                f'</div>'
            ))
        time.sleep(1)

    # Hatched!
    with timer_output:
        clear_output(wait=True)
        display(HTML(
            '<div style="font-family:\'IBM Plex Mono\',monospace;'
            'padding:12px 0;font-size:1.1em;">'
            '<span style="font-size:2em">🐔✨</span><br>'
            '<span style="color:#a6e3a1;font-weight:bold;">Ready! The chick has hatched!</span>'
            '</div>'
        ))
    time.sleep(0.5)

    elapsed = int(time.time() - start_time)
    with progress_out: clear_output()

    if "error" in result_container:
        err = result_container["error"]
        if err == "name":
            # The model object is created in the model-loading cell (Step 2)
            result = "❌ Model not found!\n💡 Please run Step 1 and Step 2 first!"
        else:
            result = f"❌ Error: {err}\n\n💡 Try running Step 2 again."
        accent = "#f38ba8"
        status = "❌ Error"
    else:
        result = result_container["result"]
        accent = "#a6e3a1"
        status = "⚠️ Done. Spot the problem!"
    tokens = result_container.get("tokens", 0)

    with result_out:
        display(HTML(f"""
        <div style="font-family:'IBM Plex Mono','Fira Code',monospace;
        background:#1e1e2e;border:1px solid #45475a;
        border-radius:12px;padding:18px 20px;margin-top:10px;color:#cdd6f4">
        <div style="font-size:0.63em;color:{accent};text-transform:uppercase;
        letter-spacing:0.15em;margin-bottom:8px">Output: {status}</div>
        <h3 style="color:#cdd6f4;margin:0 0 14px;font-size:1.0em">🤖 AI says:</h3>
        <div style="background:#181825;border:1px solid #313244;border-radius:8px;
        padding:14px 16px;font-size:0.82em;color:#cdd6f4;line-height:1.9">
        {result.replace(chr(10), '<br>')}
        </div>
        <div style="margin-top:12px;font-size:0.72em;color:#6c7086;font-family:'IBM Plex Mono',monospace">
        📊 Tokens used: <span style="color:{accent};font-weight:bold">{tokens}</span> / 100
        |
        ⏱️ Generated in: <span style="color:{accent};font-weight:bold">{elapsed} sec</span>
        </div>
        <div style="margin-top:10px;background:#181825;border-left:3px solid #89b4fa;
        border-radius:0 6px 6px 0;padding:8px 12px;font-size:0.78em;color:#89b4fa">
        🤔 Does the AI claim to be a human? Run again: does the name change?
        </div>
        </div>
        """))

    run_btn.disabled = False
    run_btn.description = "▶ Run Again"

run_btn.on_click(on_run)

🎭 What is a System Prompt?¶

Before we test the AI, let’s learn one important concept!

Think of it like this:

| Without System Prompt | With System Prompt |

|---|---|

| Actor with no script 🎭 | Actor with a clear role 🎬 |

| AI improvises freely | AI knows exactly who it is |

| Unpredictable results | Consistent and focused |

Examples:¶

"You are a helpful writing assistant" → Professional AI helper

"You are a pirate" → Arrr, I’ll help ye write! 🏴‍☠️

"You are a strict grammar teacher" → Focuses only on grammar

System Prompt = Your AI’s job description!
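In code, a system prompt is usually just the first entry in a list of chat messages. The sketch below builds that list using the same `role` / `content` dictionaries this notebook passes to `create_chat_completion` in Step 3b. The helper name `build_messages` is hypothetical, and no model is called here, so it runs instantly.

```python
def build_messages(system_prompt, user_text):
    """Return a chat message list, with the system prompt first if one is given."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_text})
    return messages

question = "Please introduce yourself in 2-3 sentences."

# Same question, three different "job descriptions" (the empty one has no system prompt)
for system_prompt in ["", "You are a pirate.", "You are a strict grammar teacher."]:
    print(build_messages(system_prompt, question))
```

Only the first message changes between runs, which is why swapping the system prompt is such a cheap way to change the model's behavior.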

👇 Run the cell below to see the difference in action!¶

Part 3b: Now WITH a System Prompt¶

In Step 3a, the AI had no identity — it made up a random persona.

Now let’s fix that by giving the AI a system prompt: a set of instructions that tells it exactly who it is and what it should do.

💡 How it works:

You define the AI’s role and capabilities below.

The model will read your instructions before answering — and stick to them.

Try changing the role to something fun:

"a friendly cooking chef"

"a wise history teacher"

"a funny joke-telling robot"

Then click ▶️ Test System Prompt and compare with Step 3a!

# ── Now WITH System Prompt ───────────────────────────────────

role_input = widgets.Text(

value="a helpful AI tutor for UC Berkeley students",

description="",

layout={"width": "500px"}

)

capabilities_input = widgets.Text(

value="answer questions, explain concepts, and give examples",

description="",

layout={"width": "500px"}

)

run_btn = widgets.Button(

description="▶️ Test System Prompt",

button_style="primary",

layout={"width": "220px", "height": "40px"}

)

output_area = widgets.Output()

display(HTML("""

<style>

.widget-text input[type="text"] {

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 4px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 4px 8px !important;

}

.widget-text input[type="text"]:focus {

border-color: #89b4fa !important;

outline: none !important;

}

.jupyter-widgets.widget-button.mod-primary,

button.mod-primary {

background: #89b4fa !important;

color: #1e1e2e !important;

border: none !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-weight: bold !important;

box-shadow: none !important;

}

.jupyter-widgets.widget-button.mod-primary:hover,

button.mod-primary:hover {

filter: brightness(1.1) !important;

cursor: pointer !important;

}

</style>

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#1e1e2e;border:1px solid #45475a;

border-radius:12px;padding:18px 20px;margin:10px 0;color:#cdd6f4">

<div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Step 3b — With System Prompt</div>

<h3 style="color:#cdd6f4;margin:0 0 10px;font-size:1.0em">🎭 Customize Your AI's Identity</h3>

<div style="background:#181825;border-left:3px solid #89b4fa;

border-radius:0 6px 6px 0;padding:10px 14px;

font-size:0.78em;color:#a6adc8;line-height:1.8">

✏️ <strong style="color:#cdd6f4">Try editing the fields below</strong> — change the role or capabilities,

then click <span style="color:#89b4fa;font-weight:bold">▶️ Test System Prompt</span>

to see how the AI introduces itself differently!

</div>

</div>

"""))

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:10px 0 2px;font-size:0.82em;

color:#1a1a2e;font-weight:bold">

🎭 AI Role:

</div>"""))

display(role_input)

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:8px 0 2px;font-size:0.82em;

color:#1a1a2e;font-weight:bold">

📝 Can Help With:

</div>"""))

display(capabilities_input)

display(HTML("<div style='margin:10px 0 4px'></div>"))

display(run_btn, output_area)

def on_run(b):
    with output_area:
        clear_output()
        system_prompt = f"You are {role_input.value}. You help users with {capabilities_input.value}."
        display(HTML(f"""
        <div style="font-family:'IBM Plex Mono','Fira Code',monospace;
        background:#1e1e2e;border:1px solid #45475a;
        border-radius:12px;padding:18px 20px;margin-top:10px;color:#cdd6f4">
        <div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;
        letter-spacing:0.15em;margin-bottom:8px">Step 3b: Generating...</div>
        <div style="font-size:0.78em;color:#a6adc8;margin-bottom:8px">
        📋 <strong style="color:#cdd6f4">System Prompt:</strong>
        </div>
        <div style="background:#181825;border:1px solid #313244;border-radius:8px;
        padding:10px 14px;font-size:0.8em;color:#89b4fa;font-style:italic;
        margin-bottom:12px">
        "{system_prompt}"
        </div>
        <div style="font-size:0.78em;color:#6c7086">⏳ Model is thinking... (~20s)</div>
        </div>
        """))
        try:
            # The system message is read first and sets the model's identity
            response = model.create_chat_completion(
                messages=[
                    {"role": "system", "content": system_prompt},
                    {"role": "user", "content": "Please introduce yourself in 2-3 sentences. Who are you and what can you help with?"}
                ],
                max_tokens=100,
                temperature=0.7,
            )
            result = response["choices"][0]["message"]["content"].strip()
            tokens = response["usage"]["completion_tokens"]
            clear_output()
            display(HTML(f"""
            <div style="font-family:'IBM Plex Mono','Fira Code',monospace;
            background:#1e1e2e;border:1px solid #45475a;
            border-radius:12px;padding:18px 20px;margin-top:10px;color:#cdd6f4">
            <div style="font-size:0.63em;color:#a6e3a1;text-transform:uppercase;
            letter-spacing:0.15em;margin-bottom:8px">Output ✅</div>
            <div style="background:#181825;border-left:3px solid #89b4fa;
            border-radius:0 6px 6px 0;padding:10px 14px;
            margin-bottom:12px;font-size:0.78em;color:#89b4fa">
            📋 <strong>System Prompt:</strong>
            <div style="font-style:italic;margin-top:4px;color:#a6adc8">"{system_prompt}"</div>
            </div>
            <div style="background:#181825;border:1px solid #313244;border-radius:8px;
            padding:14px 16px;font-size:0.82em;color:#cdd6f4;line-height:1.9;
            white-space:pre-wrap">{result}</div>
            <div style="display:flex;justify-content:space-between;
            margin-top:12px;font-size:0.72em;color:#6c7086;
            font-family:'IBM Plex Mono',monospace">
            <span>📊 Tokens used: <span style="color:#a6e3a1;font-weight:bold">{tokens}</span>/100</span>
            <span>🎨 Try changing the role and click again!</span>
            </div>
            <div style="margin-top:10px;background:#181825;border-left:3px solid #a6e3a1;
            border-radius:0 6px 6px 0;padding:8px 12px;
            font-size:0.78em;color:#a6e3a1">
            💡 Notice the difference from Step 3a? The AI now has a consistent identity!
            </div>
            </div>
            """))
        except NameError:
            clear_output()
            display(HTML(
                '<div style="color:#f38ba8;font-family:\'IBM Plex Mono\',monospace;'
                'background:#1e1e2e;border-left:3px solid #f38ba8;'
                'border-radius:0 6px 6px 0;padding:10px 14px;margin-top:10px">'
                '❌ Model not found! Please run Step 1 and Step 2 first.</div>'
            ))
        except Exception as e:
            clear_output()
            display(HTML(
                f'<div style="color:#f38ba8;font-family:\'IBM Plex Mono\',monospace;'
                f'background:#1e1e2e;border-left:3px solid #f38ba8;'
                f'border-radius:0 6px 6px 0;padding:10px 14px;margin-top:10px">'
                f'❌ Error: {e}</div>'
            ))

run_btn.on_click(on_run)

Part 3c 🎓 Real-World System Prompt Examples¶

Professor Van Dusen teaches both Data 8 (intro) and Data 100 (advanced).

Watch how the same question gets a completely different answer depending on the system prompt!

| Context | System Prompt Strategy |

|---|---|

| 📗 Data 8 student | Assume no prior coding knowledge. Use analogies, avoid jargon. |

| 📘 Data 100 student | Assume familiarity with pandas, numpy, and statistics. Be concise. |

| 📊 Econ student | Frame everything in terms of supply/demand, regression, or policy. |

| 👨‍🏫 Professor Van Dusen | You ARE the professor: give feedback on a student’s analysis. |
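As a rough sketch of what the strategies in this table amount to in code: a system prompt is simply the first entry in the list of chat messages sent to the model. The helper name `build_messages` and the two shortened prompts are illustrative assumptions, not part of the notebook; only the `{"role": ..., "content": ...}` message shape mirrors what the notebook's cells pass to the model.

```python
# Hypothetical helper: pairs one system prompt with one user question.
def build_messages(system_prompt, question):
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]

# Shortened, illustrative prompts (not the notebook's full persona texts).
strategies = {
    "Data 8": "Assume no prior coding knowledge. Use analogies, avoid jargon.",
    "Data 100": "Assume familiarity with pandas, numpy, and statistics. Be concise.",
}

question = "What is a for-loop?"
for name, prompt in strategies.items():
    messages = build_messages(prompt, question)
    # Same user message every time; only the system message changes.
    print(name, "->", messages[0]["content"])
```

The user question stays identical across runs, so any change in the answer can be attributed to the system message alone.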

Try the interactive cell below to see the difference! 👇

# ── System Prompt Showcase — Data 8 vs Data 100 vs Econ & Prof. Eric──

PERSONAS = {

"📗 Data 8 TA (Intro, no coding background)": (

"You are a teaching assistant for a complete beginner who just entered college. "

"They have NEVER written a single line of code before. "

"You MUST use simple everyday analogies — no jargon at all. "

"Example: explain a for-loop like a daily morning routine. "

"Keep answers short, friendly, and under 3 sentences. No code."

),

"📘 Data 100 TA (Advanced, knows pandas & stats)": (

"You are a teaching assistant for a second-year university student "

"who is already comfortable with Python, pandas, and basic statistics. "

"NEVER explain basics. Go straight to the technical details. "

"Mention edge cases and efficiency. "

"Be concise and precise — maximum 3 sentences. No code blocks."

),

"📊 Econ 1 TA (Economics framing)": (

"You are an economics professor who ONLY thinks in terms of money, markets, and incentives. "

"Connect EVERY answer to a real economic example involving prices, wages, or GDP. "

"Always end with one policy implication. "

"Maximum 3 sentences total. No code, no programming examples."

),

"👨‍🏫 Professor Eric Van Dusen (giving feedback)": (

"You are Professor Eric Van Dusen from UC Berkeley, a warm and encouraging mentor "

"who genuinely cares about every student's growth. "

"Respond like a real conversation — personal, supportive, enthusiastic about data science. "

"Acknowledge what's good, then gently guide toward deeper understanding. "

"Keep it to 3 sentences maximum. No bullet points, no code."

),

}

SAMPLE_QUESTIONS = [

"What is a for-loop? Give me a simple example.",

"How does linear regression work?",

"Why do we need a train/test split in machine learning?",

"What is the difference between correlation and causation?",

"How would I analyze whether a policy had an effect?",

]

W = "660px"

# ── Widgets ───────────────────────────────────────────────────────────

persona_dropdown = widgets.Dropdown(

options=list(PERSONAS.keys()),

description="",

layout={"width": W}

)

system_preview = widgets.HTML(value="")

sample_q_dropdown = widgets.Dropdown(

options=["(Pick a sample question or type your own below)"] + SAMPLE_QUESTIONS,

description="",

layout={"width": W}

)

question_input = widgets.Textarea(

placeholder="Type a question here, or select a sample above...",

description="",

layout={"width": W, "height": "80px"}

)

generate_btn = widgets.Button(

description="🚀 Ask this Persona",

button_style="primary",

layout={"width": "200px", "height": "40px"}

)

output_area = widgets.Output()

# ── System prompt preview card ────────────────────────────────────────

def update_preview(persona_name):

text = PERSONAS[persona_name]

system_preview.value = f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#181825;border-left:3px solid #89b4fa;

border-radius:0 6px 6px 0;

padding:12px 16px;margin:4px 0 10px 0;font-size:0.78em;

color:#a6adc8;line-height:1.8;width:calc({W} - 32px)">

<div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:6px">📋 System Prompt</div>

{text}

</div>

"""

update_preview(list(PERSONAS.keys())[0])

# ── Header + CSS ──────────────────────────────────────────────────────

display(HTML(f"""

<style>

.widget-dropdown select {{

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 4px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 4px 8px !important;

}}

.widget-dropdown select:focus {{

border-color: #89b4fa !important;

outline: none !important;

}}

.widget-textarea textarea {{

background: #181825 !important;

color: #cdd6f4 !important;

border: 1px solid #45475a !important;

border-radius: 4px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-size: 0.82em !important;

padding: 8px 10px !important;

resize: vertical !important;

}}

.widget-textarea textarea:focus {{

border-color: #89b4fa !important;

outline: none !important;

}}

.widget-textarea textarea::placeholder {{

color: #6c7086 !important;

}}

.jupyter-widgets.widget-button.mod-primary,

button.mod-primary {{

background: #89b4fa !important;

color: #1e1e2e !important;

border: none !important;

border-radius: 6px !important;

font-family: 'IBM Plex Mono', 'Fira Code', monospace !important;

font-weight: bold !important;

box-shadow: none !important;

}}

.jupyter-widgets.widget-button.mod-primary:hover,

button.mod-primary:hover {{

filter: brightness(1.1) !important;

cursor: pointer !important;

}}

</style>

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#1e1e2e;border:1px solid #45475a;

border-radius:12px;padding:18px 20px;margin:10px 0;color:#cdd6f4;width:644px">

<div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Part 3c</div>

<h3 style="color:#cdd6f4;margin:0 0 6px;font-size:1.0em">🎓 System Prompt Showcase</h3>

<p style="color:#a6adc8;font-size:0.82em;margin:0">

Same question. Different persona. Totally different answer!

</p>

</div>

"""))

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:10px 0 2px;font-size:0.82em;color:#1a1a2e;font-weight:bold">

🎭 Persona:

</div>"""))

display(persona_dropdown)

display(system_preview)

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:8px 0 2px;font-size:0.82em;color:#1a1a2e;font-weight:bold">

💡 Sample Question:

</div>"""))

display(sample_q_dropdown)

display(HTML("""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:8px 0 2px;font-size:0.82em;color:#1a1a2e;font-weight:bold">

❓ Your Question:

</div>"""))

display(question_input)

display(HTML("<div style='margin:10px 0 4px'></div>"))

display(generate_btn)

display(output_area)

# ── Observers ─────────────────────────────────────────────────────────

def on_persona_change(change):

    update_preview(change["new"])

def on_sample_change(change):

    if change["new"] != "(Pick a sample question or type your own below)":

        question_input.value = change["new"]

# ── Button handler ────────────────────────────────────────────────────

def on_generate(b):

with output_area:

clear_output()

q = question_input.value.strip()

if not q:

display(HTML(

'<div style="color:#f38ba8;font-family:\'IBM Plex Mono\',monospace;'

'background:#1e1e2e;border-left:3px solid #f38ba8;'

'border-radius:0 6px 6px 0;padding:8px 14px;margin-top:8px">'

'⚠️ Please enter a question!</div>'

))

return

persona_name = persona_dropdown.value

sys_prompt = PERSONAS[persona_name]

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#1e1e2e;border:1px solid #45475a;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#cdd6f4;width:620px">

<div style="font-size:0.63em;color:#89b4fa;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Generating...</div>

<div style="font-size:0.82em;color:#a6adc8;margin-bottom:10px">

🎭 <strong style="color:#cdd6f4">{persona_name}</strong>

</div>

<div style="background:#181825;border-left:3px solid #89b4fa;

border-radius:0 6px 6px 0;

padding:10px 14px;font-size:0.8em;color:#89b4fa;font-style:italic">

"{q}"

</div>

<div style="margin-top:12px;font-size:0.78em;color:#6c7086">

⏳ Model is thinking... (~20s)

</div>

</div>

"""))

try:

response = model.create_chat_completion(

messages=[

{"role": "system", "content": sys_prompt},

{"role": "user", "content": q}

],

max_tokens=200,

temperature=0.7,

)

result = response["choices"][0]["message"]["content"].strip()

tokens = response["usage"]["completion_tokens"]

clear_output()

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#1e1e2e;border:1px solid #45475a;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#cdd6f4;width:620px">

<div style="font-size:0.63em;color:#a6e3a1;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Output ✅</div>

<div style="margin-bottom:12px;font-size:0.9em;font-weight:bold;color:#cdd6f4">

{persona_name}

</div>

<div style="background:#181825;border-left:3px solid #89b4fa;

border-radius:0 6px 6px 0;padding:10px 14px;

margin-bottom:12px;font-size:0.78em;

color:#89b4fa;font-style:italic">

"{q}"

</div>

<div style="background:#181825;border:1px solid #313244;border-radius:8px;

padding:14px 16px;font-size:0.82em;color:#cdd6f4;line-height:1.9;

white-space:pre-wrap">{result}</div>

<div style="display:flex;justify-content:space-between;

margin-top:12px;font-size:0.72em;color:#6c7086;

font-family:'IBM Plex Mono',monospace">

<span>📊 Tokens used: <span style="color:#a6e3a1;font-weight:bold">{tokens}</span>/200</span>

<span>💡 Try the same question with a different persona!</span>

</div>

</div>

"""))

except NameError:

clear_output()

display(HTML(

'<div style="color:#f38ba8;font-family:\'IBM Plex Mono\',monospace;'

'background:#1e1e2e;border-left:3px solid #f38ba8;'

'border-radius:0 6px 6px 0;padding:10px 14px;margin-top:10px">'

'❌ Model not found! Please run Step 2 first.</div>'

))

except Exception as e:

clear_output()

display(HTML(

f'<div style="color:#f38ba8;font-family:\'IBM Plex Mono\',monospace;'

f'background:#1e1e2e;border-left:3px solid #f38ba8;'

f'border-radius:0 6px 6px 0;padding:10px 14px;margin-top:10px">'

f'❌ Error: {e}</div>'

))

persona_dropdown.observe(on_persona_change, names="value")

sample_q_dropdown.observe(on_sample_change, names="value")

generate_btn.on_click(on_generate)

print("✅ Showcase loaded! Choose a persona and ask a question.")

✅ Showcase loaded! Choose a persona and ask a question.
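One caveat about the result cards in the cells above: they place the model's text and your question directly into an HTML f-string, so a reply containing characters such as `<` or `&` may render oddly or vanish. Below is a minimal sketch of one way to guard against that with the standard library's `html.escape`. The function name `render_card` and its markup are hypothetical and far simpler than the notebook's cards.

```python
import html

# Hypothetical, simplified card renderer (not the notebook's actual markup).
def render_card(question, answer):
    # Escape &, <, > and quotes so the text is shown literally
    # instead of being interpreted as HTML tags.
    safe_q = html.escape(question)
    safe_a = html.escape(answer)
    return (
        '<div style="white-space:pre-wrap">'
        f"<em>{safe_q}</em><br>{safe_a}"
        "</div>"
    )

card = render_card("What does <b> do?", "Use df[df.x < 3] & check the result.")
print(card)
```

In a notebook, the returned string could be passed to `display(HTML(...))` in the same way the existing cards are.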

✍️ Part 4: Your Personal Writing Assistant¶

⚠️ Make sure you ran Parts 1, 2, and 3 first!¶

Now that you understand system prompts, let’s put it all together!

This is your own AI writing assistant — powered by the same local model, but now you control:

🎭 Mode — what kind of writing you want (email, story, summary...)

🌡️ Creativity — how creative or precise the AI should be

📏 Length — how long the response should be

📋 System Prompt — you can even edit the AI’s personality directly!

💡 Try changing the Creativity slider and running the same prompt twice — notice the difference?
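To see why the Creativity slider matters, here is a minimal sketch of the usual way temperature is applied: the model's raw scores (logits) are divided by the temperature before being turned into probabilities. The three words and their scores are made up for illustration, and the local model's sampler may differ in detail.

```python
import math

def softmax_with_temperature(logits, temperature):
    # Divide each raw score by the temperature, then normalize.
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Made-up next-word candidates and scores (illustrative only).
words = ["the", "a", "banana"]
logits = [2.0, 1.0, 0.1]

for t in (0.1, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    summary = ", ".join(f"{w}={p:.2f}" for w, p in zip(words, probs))
    print(f"temperature={t}: {summary}")
```

At a low temperature nearly all of the probability lands on the top-scoring word, while a higher temperature spreads it toward the less likely candidates.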

# ── Personal Writing Assistant ───────────────────────────────

MODES = {

"📧 Write an Email" : "Write a professional email about: ",

"💡 Brainstorm Ideas" : "Give me 5 creative ideas about: ",

"✏️ Improve My Writing" : "Improve this text and make it clearer: ",

"🎨 Creative Writing" : "Write a short creative story about: ",

"📋 Make a Summary" : "Summarize this in 3 bullet points: ",

}

W = "660px"

LABEL_HTML = """

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:10px 0 2px;font-size:0.82em;color:#1a1a2e;font-weight:bold">

{icon} {text}

</div>"""

# ── Widgets ───────────────────────────────────────────────────────────

mode_selector = widgets.Dropdown(

options=list(MODES.keys()),

description="",

layout={"width": W}

)

user_input = widgets.Textarea(

placeholder="Type your topic or text here...",

description="",

layout={"width": W, "height": "90px"}

)

system_prompt_box = widgets.Textarea(

value="You are a helpful AI writing assistant. Help users write emails, essays, and creative content. Keep responses clear and professional. Maximum 3 sentences.",

description="",

layout={"width": W, "height": "70px"}

)

temp_slider = widgets.FloatSlider(

value=0.7, min=0.1, max=1.5, step=0.1,

description="",

layout={"width": W}

)

token_slider = widgets.IntSlider(

value=150, min=50, max=500, step=50,

description="",

layout={"width": W}

)

generate_btn = widgets.Button(

description="✨ Generate",

button_style="primary",

layout={"width": "160px", "height": "40px"}

)

clear_btn = widgets.Button(

description="🗑️ Clear",

button_style="warning",

layout={"width": "120px", "height": "40px"}

)

output_area = widgets.Output()

# ── Header ────────────────────────────────────────────────────────────

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:1px solid #3b5268;

border-radius:12px;padding:18px 20px;margin:10px 0;

color:#e2e8f0;width:644px">

<div style="font-size:0.63em;color:#7ea8c9;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Part 4</div>

<h3 style="color:#f0f6ff;margin:0 0 6px;font-size:1.0em">✍️ Personal Writing Assistant</h3>

<p style="color:#94b8d4;font-size:0.82em;margin:0">

Powered by Llama 🦙 | Running on JupyterHub

</p>

</div>

"""))

# ── Widgets with labels ───────────────────────────────────────────────

display(HTML(LABEL_HTML.format(icon="📌", text="Mode:")))

display(mode_selector)

display(HTML(LABEL_HTML.format(icon="📝", text="Input:")))

display(user_input)

display(HTML(LABEL_HTML.format(icon="🎭", text="System Prompt:")))

display(system_prompt_box)

display(HTML(LABEL_HTML.format(icon="🌡️", text="Creativity:")))

display(temp_slider)

display(widgets.HTML(

"<div style='margin:-6px 0 8px 0;font-size:0.75em;color:#999;font-family:monospace'>"

"💡 Low = Precise (0.1) | Balanced (0.7) | High = Creative (1.5)</div>"

))

display(HTML(LABEL_HTML.format(icon="📏", text="Length:")))

display(token_slider)

display(widgets.HTML(

"<div style='margin:-6px 0 12px 0;font-size:0.75em;color:#888;font-family:monospace'>"

"💡 50 = Short | 150 = Medium | 500 = Long</div>"

))

display(widgets.HBox([generate_btn, clear_btn],

layout=widgets.Layout(gap="10px", margin="8px 0 0 0")))

display(output_area)

# ── Handlers ──────────────────────────────────────────────────────────

def on_generate(b):

with output_area:

clear_output()

if not user_input.value.strip():

display(HTML(

'<span style="color:#f87171;font-family:monospace">'

'⚠️ Please type something in the Input box first!</span>'

))

return

full_prompt = MODES[mode_selector.value] + user_input.value.strip()

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:1px solid #38bdf8;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#e2e8f0;width:620px">

<div style="font-size:0.63em;color:#38bdf8;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:10px">Generating...</div>

<div style="font-size:0.82em;color:#94b8d4;margin-bottom:6px">

⚙️ <strong style="color:#f0f6ff">{mode_selector.value}</strong>

</div>

<div style="display:flex;gap:16px;font-size:0.75em;color:#7a9bb5;margin-bottom:12px">

<span>🌡️ Creativity: {temp_slider.value}</span>

<span>📏 Length: {token_slider.value} tokens</span>

</div>

<div style="background:#020408;border:1px solid #38bdf822;border-radius:8px;

padding:10px 14px;font-size:0.8em;color:#7dd3fc;font-style:italic">

"{user_input.value.strip()}"

</div>

<div style="margin-top:12px;font-size:0.78em;color:#a6adc8">

⏳ Model is thinking... please wait...

</div>

</div>

"""))

try:

response = model.create_chat_completion(

messages=[

{"role": "system", "content": system_prompt_box.value},

{"role": "user", "content": full_prompt}

],

max_tokens=token_slider.value,

temperature=temp_slider.value,

)

result = response["choices"][0]["message"]["content"].strip()

tokens = response["usage"]["completion_tokens"]

clear_output()

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:2px solid #34d399;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#e2e8f0;width:620px">

<div style="font-size:0.63em;color:#34d399;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Output</div>

<div style="font-size:0.9em;font-weight:bold;color:#f0f6ff;margin-bottom:10px">

{mode_selector.value}

</div>

<div style="display:flex;gap:16px;font-size:0.75em;color:#7a9bb5;margin-bottom:12px">

<span>🌡️ Creativity: {temp_slider.value}</span>

<span>📏 {token_slider.value} tokens</span>

</div>

<div style="background:#0f2336;border:1px solid #38bdf855;border-radius:8px;

padding:10px 14px;margin-bottom:12px;font-size:0.78em;

color:#7dd3fc;font-style:italic">

"{user_input.value.strip()}"

</div>

<div style="background:#020408;border:1px solid #34d39922;border-radius:8px;

padding:14px 16px;font-size:0.82em;color:#cbd5e1;line-height:1.9;

white-space:pre-wrap">{result}</div>

<div style="display:flex;justify-content:space-between;

margin-top:12px;font-size:0.72em;color:#7a9bb5">

<span>📊 Tokens used: {tokens}/{token_slider.value}</span>

<span>💡 Try changing the creativity slider and run again!</span>

</div>

</div>

"""))

except Exception as e:

clear_output()

display(HTML(

f'<div style="color:#f87171;font-family:monospace;padding:10px">'

f'❌ Error: {e}<br>💡 Try running Step 2 again!</div>'

))

def on_clear(b):

    with output_area:

        clear_output()

    user_input.value = ""

generate_btn.on_click(on_generate)

clear_btn.on_click(on_clear)

🪄 Part 5: Few-Shot Learning — Teach the AI YOUR Style¶

⚠️ Make sure you ran Parts 1, 2, 3, and 4 first!¶

So far, you’ve been telling the AI what role to play with a system prompt.

Now let’s try something different — instead of instructions, you’ll show the AI examples.

💡 What is Few-Shot Learning?

“Few-shot” means giving the AI just a few examples to learn from.

No retraining required: the model itself does not change. It reads your examples in the prompt and adapts its output to your style.

How it works:

Paste 2 examples of your own writing (emails, messages, anything!)

Tell the AI what you want it to write

Watch it match your tone, vocabulary, and personality 🪄

🔍 Compare with Part 4:

Part 4 used a system prompt to define the AI’s role.

Part 5 adds your own writing examples to the prompt, so the AI can copy your style without a detailed description of it.

Try it with your own writing style and see what happens! 👇
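The three steps above can be sketched as a small prompt-building function. It follows the same idea as this part's code cell (examples first, then the task), but the function name `build_few_shot_prompt` and the sample texts are assumptions made for illustration.

```python
# Hypothetical helper: turns writing samples plus a task into one prompt.
def build_few_shot_prompt(examples, task):
    parts = ["Here are examples of how I write:"]
    # Drop empty samples, then number the rest so the model can tell them apart.
    kept = [e.strip() for e in examples if e.strip()]
    for i, text in enumerate(kept, start=1):
        parts.append(f"Example {i}:\n{text}")
    parts.append(
        "Now write in the SAME style, tone, and voice as the examples above.\n"
        f"Task: {task.strip()}"
    )
    return "\n\n".join(parts)

prompt = build_few_shot_prompt(
    ["hey team!! quick update, demo went great :)", ""],
    "Write an email about the team meeting tomorrow",
)
print(prompt)
```

Because empty samples are skipped, leaving the second example blank still produces a valid prompt with a single example.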

# ── Part 5: Few-Shot Learning ─────────────────────────────────────────

W = "660px"

LABEL_HTML = """

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:10px 0 2px;font-size:0.82em;color:#1a1a2e;font-weight:bold">

{icon} {text}

</div>"""

# ── Widgets ───────────────────────────────────────────────────────────

example1 = widgets.Textarea(

placeholder="Paste your 1st writing example here...\nE.g. an email, message, or paragraph you wrote before.",

description="",

layout={"width": W, "height": "100px"}

)

example2 = widgets.Textarea(

placeholder="Paste your 2nd writing example here...\nThe more examples you give, the better the AI understands your style!",

description="",

layout={"width": W, "height": "100px"}

)

new_task = widgets.Textarea(

placeholder="What do you want the AI to write?\nE.g. 'Write an email about the team meeting tomorrow'",

description="",

layout={"width": W, "height": "80px"}

)

temp_slider = widgets.FloatSlider(

value=0.7, min=0.1, max=1.5, step=0.1,

description="",

layout={"width": W}

)

generate_btn = widgets.Button(

description="🪄 Write in My Style",

button_style="success",

layout={"width": "200px", "height": "40px"}

)

clear_btn = widgets.Button(

description="🗑️ Clear",

button_style="warning",

layout={"width": "120px", "height": "40px"}

)

output_area = widgets.Output()

# ── Header ────────────────────────────────────────────────────────────

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:1px solid #3b5268;

border-radius:12px;padding:18px 20px;margin:10px 0;

color:#e2e8f0;width:644px">

<div style="font-size:0.63em;color:#7ea8c9;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Part 5</div>

<h3 style="color:#f0f6ff;margin:0 0 6px;font-size:1.0em">🪄 Few-Shot Learning</h3>

<p style="color:#94b8d4;font-size:0.82em;margin:0 0 12px">

Give the AI 2 examples of how you write — it will match your tone automatically!

</p>

<div style="background:#0f2336;border:1px solid #38bdf855;border-radius:8px;

padding:10px 14px;font-size:0.78em;color:#7dd3fc;line-height:1.8">

<strong style="color:#38bdf8">How it works:</strong><br>

1. Paste 2 examples of YOUR writing<br>

2. Tell the AI what you want it to write<br>

3. Watch it match your tone and style 🪄

</div>

</div>

"""))

# ── Widgets with labels ───────────────────────────────────────────────

display(HTML(LABEL_HTML.format(icon="✍️", text="Example 1:")))

display(example1)

display(HTML(LABEL_HTML.format(icon="✍️", text="Example 2:")))

display(example2)

display(HTML(LABEL_HTML.format(icon="📌", text="Your Task:")))

display(new_task)

display(HTML(LABEL_HTML.format(icon="🌡️", text="Creativity:")))

display(temp_slider)

display(widgets.HTML(

"<div style='margin:-6px 0 12px 0;font-size:0.75em;color:#888;font-family:monospace'>"

"💡 Low = Precise (0.1) | Balanced (0.7) | High = Creative (1.5)</div>"

))

display(widgets.HBox([generate_btn, clear_btn],

layout=widgets.Layout(gap="10px")))

display(output_area)

# ── Handlers ──────────────────────────────────────────────────────────

def on_generate(b):

with output_area:

clear_output()

if not example1.value.strip():

display(HTML('<span style="color:#f87171;font-family:monospace">⚠️ Please fill in at least Example 1!</span>'))

return

if not new_task.value.strip():

display(HTML('<span style="color:#f87171;font-family:monospace">⚠️ Please fill in Your Task!</span>'))

return

timer_output = widgets.Output()

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:1px solid #38bdf8;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#e2e8f0;width:620px">

<div style="font-size:0.63em;color:#38bdf8;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Generating...</div>

<div style="font-size:0.82em;color:#94b8d4;margin-bottom:10px">

🪄 Learning your writing style...

</div>

<div style="background:#020408;border:1px solid #38bdf822;border-radius:8px;

padding:10px 14px;font-size:0.8em;color:#7dd3fc;font-style:italic">

"{new_task.value.strip()}"

</div>

</div>

"""))

display(timer_output)

start_time = time.time()

result_container = {}

def run_model():

prompt = f"Here are examples of how I write:\n\nExample 1:\n{example1.value.strip()}\n"

if example2.value.strip():

prompt += f"\nExample 2:\n{example2.value.strip()}\n"

prompt += f"\nNow write in the SAME style, tone, and voice as the examples above.\nTask: {new_task.value.strip()}"

try:

response = model.create_chat_completion(

messages=[

{"role": "system", "content": "You are a writing assistant. Study the examples provided and write new content that perfectly matches the user's tone, style, and voice. Maximum 3 sentences."},

{"role": "user", "content": prompt}

],

max_tokens=200,

temperature=temp_slider.value,

)

result_container["result"] = response["choices"][0]["message"]["content"].strip()

result_container["tokens"] = response["usage"]["completion_tokens"]

except Exception as e:

result_container["error"] = str(e)

thread = threading.Thread(target=run_model)

thread.start()

# ── Countdown timer ───────────────────────────────────

total = 15

while thread.is_alive():

elapsed = int(time.time() - start_time)

remaining = max(0, total - elapsed)

bar_filled = min(15, int((elapsed / total) * 15))

bar = "█" * bar_filled + "░" * (15 - bar_filled)

with timer_output:

clear_output(wait=True)

display(HTML(

f'<div style="font-family:\'IBM Plex Mono\',monospace;'

f'padding:10px 0;font-size:0.85em;color:#a6adc8;">'

f'⏳ Estimated time remaining: <span style="color:#38bdf8;font-weight:bold;font-size:1.1em">{remaining}</span> sec<br>'

f'<span style="color:#38bdf8;letter-spacing:2px">{bar}</span>'

f'</div>'

))

time.sleep(1)

elapsed = int(time.time() - start_time)

clear_output()

if "error" in result_container:

display(HTML(

f'<div style="color:#f87171;font-family:monospace;padding:10px">'

f'❌ Error: {result_container["error"]}<br>💡 Try running Step 2 again!</div>'

))

return

result = result_container["result"]

tokens = result_container["tokens"]

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:2px solid #34d399;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#e2e8f0;width:620px">

<div style="font-size:0.63em;color:#34d399;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Output</div>

<h3 style="color:#f0f6ff;margin:0 0 12px;font-size:1.0em">

🪄 AI writing in YOUR style

</h3>

<div style="background:#0f2336;border:1px solid #38bdf855;border-radius:8px;

padding:10px 14px;margin-bottom:12px;font-size:0.78em;

color:#7dd3fc;font-style:italic">

Task: "{new_task.value.strip()}"

</div>

<div style="background:#020408;border:1px solid #34d39922;border-radius:8px;

padding:14px 16px;font-size:0.82em;color:#cbd5e1;line-height:1.9;

white-space:pre-wrap">{result}</div>

<div style="display:flex;justify-content:space-between;

margin-top:12px;font-size:0.72em;color:#7a9bb5">

<span>📊 Tokens used: {tokens}/200</span>

<span>⏱️ Generated in: <span style="color:#34d399;font-weight:bold">{elapsed} sec</span></span>

</div>

<div style="margin-top:10px;background:#0d2b1a;border:1px solid #34d39944;

border-radius:6px;padding:8px 12px;font-size:0.78em;color:#6ee7b7">

💡 Notice how it matches your tone and style? Try changing the task and run again!

</div>

</div>

"""))

def on_clear(b):

    with output_area:

        clear_output()

    example1.value = ""

    example2.value = ""

    new_task.value = ""

generate_btn.on_click(on_generate)

clear_btn.on_click(on_clear)

🎛️ Part 5a: AI Control Panel — Mix Your Perfect Response!¶

⚠️ Make sure you ran Parts 1, 2, 3, 4, and 5 first!¶

So far you’ve learned how system prompts and few-shot examples shape what the AI says.

Now let’s go deeper — and control how the AI thinks.

🎧 Think of it like a DJ mixing board.

Each slider changes one aspect of how the AI generates text.

Small changes can lead to very different results!

Here’s what each parameter does:

| Parameter | What it controls |

|---|---|

| 🌡️ Temperature | How creative vs. predictable the AI is |

| 🎯 Top-p | How wide or narrow the AI’s word choices are |

| 🚫 Freq Penalty | How much the AI avoids repeating the same words |

| 🆕 Presence Penalty | How likely the AI is to introduce new topics |

| 📏 Max Tokens | How long the response can be |
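As a rough illustration of the Top-p row: nucleus (top-p) sampling keeps the most likely words until their combined probability reaches p, then samples only from that shortlist. The word probabilities below are invented, and `top_p_filter` is a hypothetical helper rather than the model library's implementation.

```python
def top_p_filter(probs, top_p):
    # Sort candidates from most to least likely.
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for word, p in ranked:
        kept.append((word, p))
        cumulative += p
        if cumulative >= top_p:
            break  # the shortlist now covers at least top_p of the mass
    # Renormalize so the kept probabilities sum to 1.
    total = sum(p for _, p in kept)
    return {word: p / total for word, p in kept}

# Invented next-word probabilities (illustrative only).
probs = {"data": 0.5, "model": 0.3, "pizza": 0.15, "zebra": 0.05}

print(top_p_filter(probs, 0.5))  # narrow pool
print(top_p_filter(probs, 0.9))  # wider pool
```

A smaller p leaves fewer candidates, which is why low Top-p settings tend to produce more predictable wording.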

Your job: Try the same prompt with different settings and observe what changes.

Use the preset buttons to quickly load interesting combinations, or tune the sliders yourself!

💡 Tips for best results:

Run at least 3–5 experiments with the same prompt but different settings

Try asking for a recommendation letter, a poem, or a data science explanation

Add a System Prompt to give the AI a persona (e.g. Professor Eric) and see how it changes the tone

The more experiments you run, the more interesting the comparison in Part 5b will be!

💾 Every experiment is automatically saved — run the Compare Results cell below to see all your experiments side by side!
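The two penalty sliders can be pictured as adjustments to a word's score before sampling. The sketch below uses a common formulation, in which the frequency penalty grows with how many times a word has already appeared and the presence penalty is a flat deduction once it has appeared at all. Treat it as an assumption about the general technique: the local model's exact formula may differ, and the scores are made up.

```python
from collections import Counter

def apply_penalties(logits, generated_words, freq_penalty, presence_penalty):
    counts = Counter(generated_words)
    adjusted = {}
    for word, score in logits.items():
        seen = counts[word]
        # Frequency penalty scales with the count; presence penalty is
        # applied once if the word has been used at all.
        adjusted[word] = (
            score
            - seen * freq_penalty
            - (presence_penalty if seen > 0 else 0.0)
        )
    return adjusted

# Made-up scores and a made-up partial output (illustrative only).
logits = {"great": 2.0, "good": 1.5, "solid": 1.0}
so_far = ["great", "great", "good"]

print(apply_penalties(logits, so_far, freq_penalty=0.5, presence_penalty=0.4))
```

Words that have not been used yet keep their original score, so raising either penalty makes unused words relatively more attractive.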

# ── AI Control Panel ─────────────────────────────────────────

W = "660px"

WS = "510px"

LABEL_HTML = """<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

margin:14px 0 3px; font-size:0.92em; color:#1a1a2e; font-weight:800;

letter-spacing:0.03em;">

<span style="color:{accent}; font-size:1.1em;">{icon}</span> {text}

</div>"""

HINT_HTML = """<div style="margin:-2px 0 10px 0; font-size:0.75em; color:#555;

font-family:'IBM Plex Mono',monospace; border-left:3px solid {accent};

padding-left:8px;">{text}</div>"""

# ── Initialize experiment log ─────────────────────────────────────────

try:

    results_df

except NameError:

    results_df = pd.DataFrame(columns=[

        "Prompt", "System Prompt", "Temperature", "Top-p",

        "Freq Penalty", "Presence Penalty",

        "Max Tokens", "AI Response"

    ])

# ── Widgets ───────────────────────────────────────────────────────────

system_prompt_input = widgets.Textarea(

placeholder="Optional: Give the AI a persona or instructions...\nE.g. 'You are Professor Eric Van Dusen, write a recommendation letter for this student.'",

description="",

layout={"width": W, "height": "80px"}

)

task_input = widgets.Textarea(

placeholder="What do you want the AI to write?",

description="",

layout={"width": W, "height": "80px"}

)

temp_slider = widgets.FloatSlider(

value=0.7, min=0.0, max=2.0, step=0.1,

description="", layout={"width": WS}, readout_format='.1f'

)

top_p_slider = widgets.FloatSlider(

value=0.9, min=0.1, max=1.0, step=0.1,

description="", layout={"width": WS}, readout_format='.1f'

)

freq_slider = widgets.FloatSlider(

value=0.0, min=0.0, max=2.0, step=0.2,

description="", layout={"width": WS}, readout_format='.1f'

)

presence_slider = widgets.FloatSlider(

value=0.0, min=0.0, max=2.0, step=0.2,

description="", layout={"width": WS}, readout_format='.1f'

)

length_slider = widgets.IntSlider(

value=150, min=50, max=500, step=50,

description="", layout={"width": WS}

)

# ── Preset buttons ────────────────────────────────────────────────────

preset_conservative = widgets.Button(description="🎓 Academic", button_style="info", layout={"width": "150px"})

preset_creative = widgets.Button(description="🎨 Creative", button_style="warning", layout={"width": "150px"})

preset_concise = widgets.Button(description="⚡ Concise", button_style="success", layout={"width": "150px"})

preset_diverse = widgets.Button(description="🌈 Diverse", button_style="danger", layout={"width": "150px"})

generate_btn = widgets.Button(

description="🚀 Generate",

button_style="primary",

layout={"width": "160px", "height": "40px"}

)

output_area = widgets.Output()

preset_out = widgets.Output()

# ── Header ────────────────────────────────────────────────────────────

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:1px solid #3b5268;

border-radius:12px;padding:18px 20px;margin:10px 0;

color:#e2e8f0;width:644px">

<div style="font-size:0.63em;color:#7ea8c9;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Part 5a</div>

<h3 style="color:#f0f6ff;margin:0 0 6px;font-size:1.0em">🎛️ AI Control Panel</h3>

<p style="color:#94b8d4;font-size:0.82em;margin:0">

Tweak these settings like a DJ mixing a track 🎧

</p>

</div>

"""))

display(HTML(LABEL_HTML.format(icon="🎭", text="System Prompt:", accent="#a855f7")))

display(HTML(HINT_HTML.format(text="Optional — give the AI a persona, role, or special instructions", accent="#a855f7")))

display(system_prompt_input)

display(HTML(LABEL_HTML.format(icon="📝", text="Prompt:", accent="#3b82f6")))

display(task_input)

display(HTML("""<div style="font-family:'IBM Plex Mono',monospace;

margin:18px 0 6px; font-size:0.92em; color:#1a1a2e; font-weight:800;">

🎚️ Mix the Parameters:</div>"""))

display(HTML(LABEL_HTML.format(icon="🌡️", text="Creativity:", accent="#f97316")))

display(temp_slider)

display(HTML(HINT_HTML.format(text="Low = safe & precise | High = wild & creative", accent="#f97316")))

display(HTML(LABEL_HTML.format(icon="🎯", text="Word Pool:", accent="#8b5cf6")))

display(top_p_slider)

display(HTML(HINT_HTML.format(text="Low = common words only | High = surprising words", accent="#8b5cf6")))

display(HTML(LABEL_HTML.format(icon="🚫", text="Anti-Repeat:", accent="#ef4444")))

display(freq_slider)

display(HTML(HINT_HTML.format(text="Low = allows repetition | High = strongly discourages repeated words", accent="#ef4444")))

display(HTML(LABEL_HTML.format(icon="💡", text="New Topics:", accent="#10b981")))

display(presence_slider)

display(HTML(HINT_HTML.format(text="Low = stay on topic | High = jump to new topics", accent="#10b981")))

display(HTML(LABEL_HTML.format(icon="📏", text="Length:", accent="#3b82f6")))

display(length_slider)

display(HTML(HINT_HTML.format(text="50 = short | 150 = medium | 500 = long (more tokens = longer wait!)", accent="#3b82f6")))

display(HTML("""<div style="font-family:'IBM Plex Mono',monospace;

margin:18px 0 6px; font-size:0.92em; color:#1a1a2e; font-weight:800;">

🎭 Or try a preset:</div>"""))

display(widgets.HBox([preset_conservative, preset_creative, preset_concise, preset_diverse],

layout=widgets.Layout(gap="8px")))

display(preset_out)

display(widgets.HTML("<div style='margin:10px 0 6px'></div>"))

display(generate_btn)

display(output_area)

# ── Preset handlers ───────────────────────────────────────────────────

def set_preset(temp, top_p, freq, presence, length, label):
    """Move every slider to a preset value and confirm which preset was loaded."""
    temp_slider.value = temp
    top_p_slider.value = top_p
    freq_slider.value = freq
    presence_slider.value = presence
    length_slider.value = length
    with preset_out:
        clear_output()
        display(HTML(f"""
        <div style="font-family:'IBM Plex Mono',monospace;background:#0d2b1a;
        border:1px solid #34d39944;border-radius:6px;
        padding:8px 12px;font-size:0.78em;color:#6ee7b7;margin:6px 0">
        ✅ Preset loaded: <strong>{label}</strong>
        </div>
        """))

# Argument order: temperature, top_p, frequency penalty, presence penalty, max tokens, label
preset_conservative.on_click(lambda b: set_preset(0.3, 0.5, 0.0, 0.0, 200, "🎓 Academic & Formal"))
preset_creative.on_click(lambda b: set_preset(1.5, 0.95, 0.5, 0.8, 250, "🎨 Creative & Wild"))
preset_concise.on_click(lambda b: set_preset(0.5, 0.7, 0.0, 0.0, 100, "⚡ Short & Clear"))
preset_diverse.on_click(lambda b: set_preset(0.8, 0.9, 1.5, 1.0, 200, "🌈 Maximum Variety"))

# ── Generate handler ──────────────────────────────────────────────────

def on_generate(b):
    """Run the model with the current widget settings and display the result."""
    global results_df
    # Standard-library helper: escape user text before embedding it in HTML,
    # so a prompt containing "<" or "&" renders literally instead of as markup.
    from html import escape as _escape

    with output_area:
        clear_output()
        if not task_input.value.strip():
            display(HTML('<span style="color:#f87171;font-family:monospace">⚠️ Please enter a prompt!</span>'))
            return

        # Estimate the wait from the token budget; the formula assumes about 20 seconds per 250 tokens.
        est_time = int(length_slider.value / 250 * 20)
        total = est_time
        timer_output = widgets.Output()
        sys_prompt = system_prompt_input.value.strip()
        # Show at most the first 80 characters of the system prompt.
        sys_preview = _escape(sys_prompt[:80]) + ("..." if len(sys_prompt) > 80 else "")
        sys_label = (
            f'<div style="font-size:0.75em;color:#a855f7;margin-bottom:6px">🎭 {sys_preview}</div>'
            if sys_prompt else ""
        )
        display(HTML(f"""
        <div style="font-family:'IBM Plex Mono','Fira Code',monospace;
        background:#0d1420;border:1px solid #38bdf8;
        border-radius:12px;padding:18px 20px;margin-top:10px;
        color:#e2e8f0;width:620px">
        <div style="font-size:0.63em;color:#38bdf8;text-transform:uppercase;
        letter-spacing:0.15em;margin-bottom:8px">Generating...</div>
        {sys_label}
        <div style="display:flex;flex-wrap:wrap;gap:12px;font-size:0.75em;
        color:#7a9bb5;margin-bottom:12px">
        <span>🌡️ {temp_slider.value}</span>
        <span>🎯 {top_p_slider.value}</span>
        <span>🚫 {freq_slider.value}</span>
        <span>💡 {presence_slider.value}</span>
        <span>📏 {length_slider.value} tokens</span>
        </div>
        <div style="background:#020408;border:1px solid #38bdf822;border-radius:8px;
        padding:10px 14px;font-size:0.8em;color:#7dd3fc;font-style:italic">
        "{_escape(task_input.value.strip())}"
        </div>
        <div style="margin-top:8px;font-size:0.75em;color:#f9e2af;">
        ⚠️ Estimated wait: ~{est_time} sec for {length_slider.value} tokens
        </div>
        </div>
        """))
        display(timer_output)
        start_time = time.time()
        # Filled in by the worker thread with either "result" and "tokens", or "error".
        result_container = {}

        def run_model():
            """Call the model and store its output, or the error message, in result_container."""
            try:
                messages = []
                if sys_prompt:
                    messages.append({"role": "system", "content": sys_prompt})
                messages.append({"role": "user", "content": task_input.value.strip()})
                response = model.create_chat_completion(
                    messages=messages,
                    max_tokens=length_slider.value,
                    temperature=temp_slider.value,
                    top_p=top_p_slider.value,
                    frequency_penalty=freq_slider.value,
                    presence_penalty=presence_slider.value,
                )
                result_container["result"] = response["choices"][0]["message"]["content"].strip()
                result_container["tokens"] = response["usage"]["completion_tokens"]
            except Exception as e:
                result_container["error"] = str(e)

        # Generate in a background thread so the countdown can keep updating.
        thread = threading.Thread(target=run_model)
        thread.start()

        # ── Hatching chick countdown ──────────────────────────
        while thread.is_alive():
            elapsed = int(time.time() - start_time)
            remaining = max(0, total - elapsed)
            progress = min(elapsed / total, 1.0) if total > 0 else 1.0
            if progress < 0.25:
                emoji = "🥚"
                msg = "Warming up the egg..."
                color = "#f9e2af"
            elif progress < 0.5:
                emoji = "🥚💥"
                msg = "Something is moving inside..."
                color = "#fab387"
            elif progress < 0.75:
                emoji = "🐣"
                msg = "Almost there..."
                color = "#a6e3a1"
            else:
                emoji = "🐥"
                msg = "Coming out!"
                color = "#89dceb"
            bar_filled = int(progress * 20)
            bar = "🟡" * bar_filled + "⬜" * (20 - bar_filled)
            with timer_output:
                clear_output(wait=True)
                display(HTML(
                    f'<div style="font-family:\'IBM Plex Mono\',monospace;'
                    f'padding:12px 0;font-size:1.1em;text-align:left;">'
                    f'<span style="font-size:2em">{emoji}</span><br>'
                    f'<span style="color:{color};font-weight:bold;">{msg}</span><br>'
                    f'<span style="font-size:0.8em;color:#a6adc8;">⏳ {remaining} sec remaining</span><br>'
                    f'<span style="letter-spacing:1px;font-size:0.85em">{bar}</span>'
                    f'</div>'
                ))
            time.sleep(1)

        # Hatched!
        with timer_output:
            clear_output(wait=True)
            display(HTML(
                '<div style="font-family:\'IBM Plex Mono\',monospace;'
                'padding:12px 0;font-size:1.1em;">'
                '<span style="font-size:2em">🐔✨</span><br>'
                '<span style="color:#a6e3a1;font-weight:bold;">Ready! The chick has hatched!</span>'
                '</div>'
            ))
        time.sleep(0.5)
        elapsed = int(time.time() - start_time)
        clear_output()

        if "error" in result_container:
            display(HTML(
                f'<div style="color:#f87171;font-family:monospace;padding:10px">'
                f'❌ Error: {result_container["error"]}<br>💡 Try running Step 2 again!</div>'
            ))
            return

        result = result_container["result"]
        tokens = result_container["tokens"]

        # Truncated previews keep the results table readable.
        prompt_text = task_input.value.strip()
        prompt_preview = prompt_text[:50] + ("..." if len(prompt_text) > 50 else "")
        response_preview = result[:300] + ("..." if len(result) > 300 else "")
        sys_prompt_preview = (
            sys_prompt[:50] + ("..." if len(sys_prompt) > 50 else "")
            if sys_prompt else "(none)"
        )
        new_row = {
            "Prompt": prompt_preview,
            "System Prompt": sys_prompt_preview,
            "Temperature": temp_slider.value,
            "Top-p": top_p_slider.value,
            "Freq Penalty": freq_slider.value,
            "Presence Penalty": presence_slider.value,
            "Max Tokens": length_slider.value,
            "AI Response": response_preview
        }
        # Append this run to the shared results table.
        results_df = pd.concat([results_df, pd.DataFrame([new_row])], ignore_index=True)

display(HTML(f"""

<div style="font-family:'IBM Plex Mono','Fira Code',monospace;

background:#0d1420;border:2px solid #34d399;

border-radius:12px;padding:18px 20px;margin-top:10px;

color:#e2e8f0;width:620px">

<div style="font-size:0.63em;color:#34d399;text-transform:uppercase;

letter-spacing:0.15em;margin-bottom:8px">Output</div>

<div style="display:flex;flex-wrap:wrap;gap:12px;font-size:0.75em;

color:#7a9bb5;margin-bottom:12px">

<span>🌡️ Creativity: {temp_slider.value}</span>

<span>🎯 Word Pool: {top_p_slider.value}</span>

<span>🚫 Anti-Repeat: {freq_slider.value}</span>

<span>💡 New Topics: {presence_slider.value}</span>

</div>

<div style="background:#0f2336;border:1px solid #38bdf855;border-radius:8px;

padding:10px 14px;margin-bottom:12px;font-size:0.78em;